AI at the edge: Part two

Read part one here.

Deploying artificial intelligence at the edge introduces engineering challenges that differ significantly from traditional data center environments. Systems must deliver meaningful compute performance while operating within strict environmental and operational limits.

For organizations deploying AI in mission environments, success depends on balancing performance, efficiency, and reliability.

Edge platforms are often deployed on mobile or remote systems such as autonomous vehicles, tactical platforms, aircraft, ships, and remote infrastructure sites. These environments impose strict size, weight, and power (SWaP) limitations that restrict the use of traditional enterprise servers. At the same time, AI workloads continue to grow in complexity and require powerful processors and accelerators to support real-time analysis.

Delivering performance within SWaP constraints

Modern AI applications require substantial computing resources. Tasks such as sensor fusion, object recognition, predictive analytics, and real-time inferencing depend on advanced processors and specialized accelerators.

In traditional data centers, these workloads run on large, power-intensive servers backed by robust cooling infrastructure.

Edge platforms rarely have this luxury.

Autonomous platforms, forward installations, and remote industrial systems often operate with limited power availability and constrained physical space. Hardware must deliver high performance while remaining compact, energy efficient, and thermally stable.

Purpose-built rugged architectures address this challenge by integrating high-performance processors and accelerators into compact system designs optimized for edge deployment. These platforms provide the compute density needed for advanced AI workloads while maintaining efficiency within demanding SWaP envelopes.
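To make the SWaP trade-off concrete, the following is a minimal sketch of a budget check, assuming a platform exposes fixed limits for volume, weight, and power. The class names, field names, and all figures are hypothetical and chosen only for illustration; they do not describe any particular product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SwapBudget:
    """Hypothetical platform limits for size, weight, and power."""
    max_volume_l: float
    max_weight_kg: float
    max_power_w: float


@dataclass(frozen=True)
class Component:
    """Hypothetical hardware component with its SWaP footprint."""
    name: str
    volume_l: float
    weight_kg: float
    power_w: float


def swap_margins(components, budget):
    """Return the remaining margin per dimension (negative means over budget)."""
    return {
        "volume_l": budget.max_volume_l - sum(c.volume_l for c in components),
        "weight_kg": budget.max_weight_kg - sum(c.weight_kg for c in components),
        "power_w": budget.max_power_w - sum(c.power_w for c in components),
    }


def fits_budget(components, budget):
    """True only if every SWaP dimension stays within its limit."""
    return all(margin >= 0 for margin in swap_margins(components, budget).values())


# Illustrative numbers only (assumptions, not measured values).
budget = SwapBudget(max_volume_l=10.0, max_weight_kg=8.0, max_power_w=150.0)
payload = [
    Component("processor module", volume_l=2.0, weight_kg=1.5, power_w=60.0),
    Component("accelerator", volume_l=1.0, weight_kg=1.0, power_w=75.0),
]
print(swap_margins(payload, budget))
print(fits_budget(payload, budget))
```

A check like this treats each limit independently. In practice, power draw and thermal behavior are coupled, so a real design review would go beyond simple sums.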

Reducing latency where decisions matter most

Latency is another major factor shaping edge AI deployments.

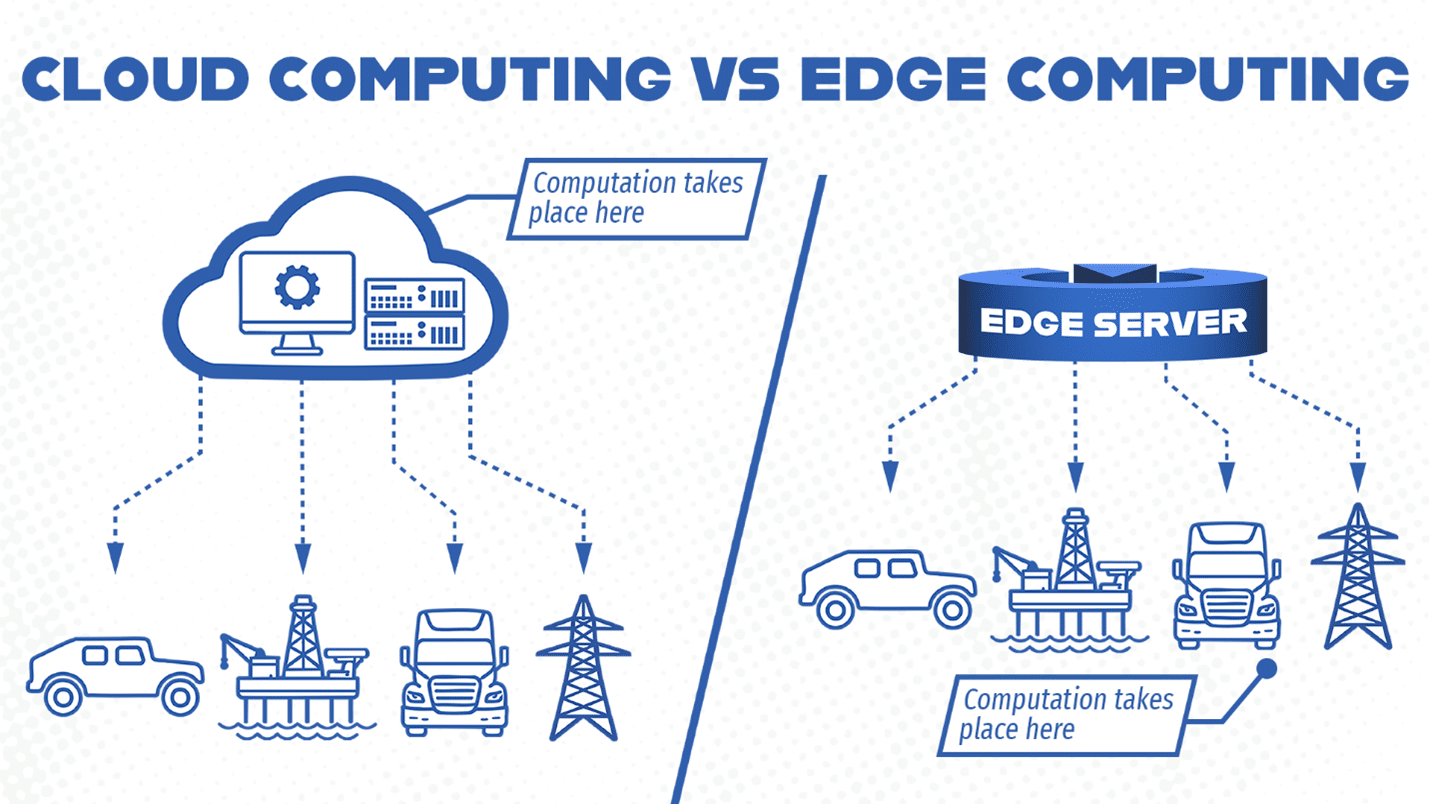

Many mission environments require immediate responses to sensor inputs or operational changes. Sending data to remote data centers for processing introduces delays that can reduce the effectiveness of AI-driven insights.

Edge computing addresses this challenge by processing data locally, enabling real-time analysis and faster decision cycles.
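The latency argument can be sketched as a simple budget comparison. The model below is a deliberate simplification: remote processing is assumed to cost uplink transfer time plus a network round trip plus inference time, while local processing costs only inference time. The function names and all numbers are hypothetical, chosen for illustration.

```python
def remote_latency_ms(payload_bytes, uplink_mbps, rtt_ms, inference_ms):
    """Estimate end-to-end latency when data is sent to a remote data center.

    Simplified model (an assumption): transfer time + round trip + inference.
    """
    transfer_ms = payload_bytes * 8 / (uplink_mbps * 1_000_000) * 1000
    return transfer_ms + rtt_ms + inference_ms


def local_latency_ms(inference_ms):
    """Estimate latency when inference runs next to the sensor."""
    return inference_ms


def meets_deadline(latency_ms, deadline_ms):
    """True if the estimated latency fits within the response deadline."""
    return latency_ms <= deadline_ms


# Illustrative values only: a 1 MB sensor frame, a 10 Mbps uplink,
# a 100 ms round trip, and a 100 ms response deadline.
remote = remote_latency_ms(
    payload_bytes=1_000_000, uplink_mbps=10, rtt_ms=100, inference_ms=10
)
local = local_latency_ms(inference_ms=40)

print(f"remote: {remote:.0f} ms, meets deadline: {meets_deadline(remote, 100)}")
print(f"local:  {local:.0f} ms, meets deadline: {meets_deadline(local, 100)}")
```

Under these assumed numbers, the slower local accelerator still responds well inside the deadline, while the remote path is dominated by transfer time over the constrained link.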

Local processing also reduces dependence on continuous network connectivity. This is particularly important in contested or remote environments where communications infrastructure may be limited or unreliable.

By placing compute resources directly alongside sensors and operational systems, edge architectures enable organizations to generate actionable intelligence when and where it matters most.

Building systems designed for operational realities

Deploying AI at the edge requires more than simply shrinking traditional computing platforms. Systems must be designed from the start to operate reliably in harsh environments while supporting advanced compute workloads.

This includes rugged mechanical design, efficient thermal management, secure system architecture, and reliable long-term performance.

In part three of this series, we will examine how rugged edge computing enables real-world AI deployments across defense, industrial, and infrastructure environments.