AI at the edge: Part one

Artificial intelligence is rapidly transforming how defense organizations, critical infrastructure operators, and industrial teams collect data, identify threats, and make decisions. As AI capabilities mature, many organizations are expanding beyond centralized cloud environments and deploying intelligence closer to where operations occur.

Edge AI allows organizations to process and analyze data locally instead of transmitting it to distant data centers. For operations that depend on speed and situational awareness, this approach lowers latency, delivers insights sooner, and increases operational autonomy. It also reduces dependence on network connectivity that may be unreliable, contested, or unavailable.

However, deploying AI at the edge requires more than relocating algorithms closer to sensors. Real-world operational environments introduce challenges that traditional computing infrastructure was never designed to handle. Delivering reliable AI performance outside controlled facilities requires rugged, purpose-built computing platforms designed for mission conditions.

The limits of centralized computing

Traditional cloud and data center architectures remain essential for large-scale training, storage, and analytics. However, many mission environments cannot rely on constant connectivity to centralized resources.

Defense operations, remote infrastructure sites, autonomous systems, and mobile platforms often operate in locations where network connectivity is intermittent, degraded, or intentionally disrupted. In these environments, transmitting large volumes of sensor data to a distant data center for analysis introduces delays that reduce the value of AI insights.

Edge computing addresses this challenge by placing compute capability directly at the source of the data. Because information is processed locally, organizations can generate actionable intelligence in real time rather than waiting for a round trip to a distant data center.

This shift improves responsiveness and enables systems to operate more independently when network access is limited.
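To make the transfer delay described above concrete, here is a rough back-of-the-envelope sketch. The function name and every number in it (capture size, link rate, round-trip time) are hypothetical values chosen for illustration, not measurements from any real deployment.

```python
def transfer_seconds(payload_megabytes: float,
                     link_megabits_per_s: float,
                     round_trip_ms: float = 0.0) -> float:
    """Estimate the time to send a payload over a link, plus one round trip.

    This ignores protocol overhead, retransmissions, and queueing, so it is
    an optimistic lower bound rather than a prediction.
    """
    if link_megabits_per_s <= 0:
        raise ValueError("link rate must be positive")
    payload_megabits = payload_megabytes * 8  # 1 byte = 8 bits
    return payload_megabits / link_megabits_per_s + round_trip_ms / 1000.0


# Hypothetical scenario: a 500 MB sensor capture sent over a degraded
# 10 Mbit/s link with a 600 ms round trip.
delay = transfer_seconds(payload_megabytes=500.0,
                         link_megabits_per_s=10.0,
                         round_trip_ms=600.0)
print(f"Estimated delay before remote analysis can begin: {delay:.1f} s")
```

Under these assumed numbers the capture takes more than six minutes to reach the data center before any analysis starts, which is the kind of delay that local processing avoids.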

Real-world environments require resilient hardware

Edge deployments occur in environments that differ dramatically from controlled data centers. Systems may operate aboard aircraft, naval vessels, autonomous vehicles, or forward operating bases. Industrial systems may be deployed in remote facilities, substations, or manufacturing environments.

In these locations, computing infrastructure is routinely exposed to vibration, shock, dust, moisture, extreme temperatures, and unstable power conditions.

Commercial servers are typically not designed for these conditions. Unexpected shutdowns, thermal throttling, or hardware failures can disrupt operations and delay decision-making at critical moments.

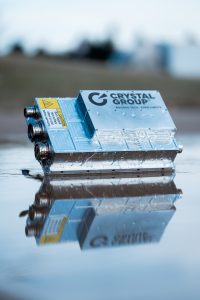

Purpose-built rugged computing platforms address these challenges by delivering reliable performance in harsh environments. These systems are designed to keep AI workloads running when environmental stressors would disable conventional hardware.

The foundation for operational AI

As organizations continue to adopt AI-driven capabilities, the ability to process data where it is generated will become increasingly important.

Edge AI provides the foundation for faster decision-making, improved situational awareness, and more resilient operations. However, these benefits depend on computing infrastructure designed for the realities of mission environments.

In part two of this series, we will explore the engineering challenges of deploying AI at the edge, including size, weight, and power constraints, latency requirements, and the need for resilient system design.